Reconstructing Physical AI Decisions: Why State Matters More Than Models

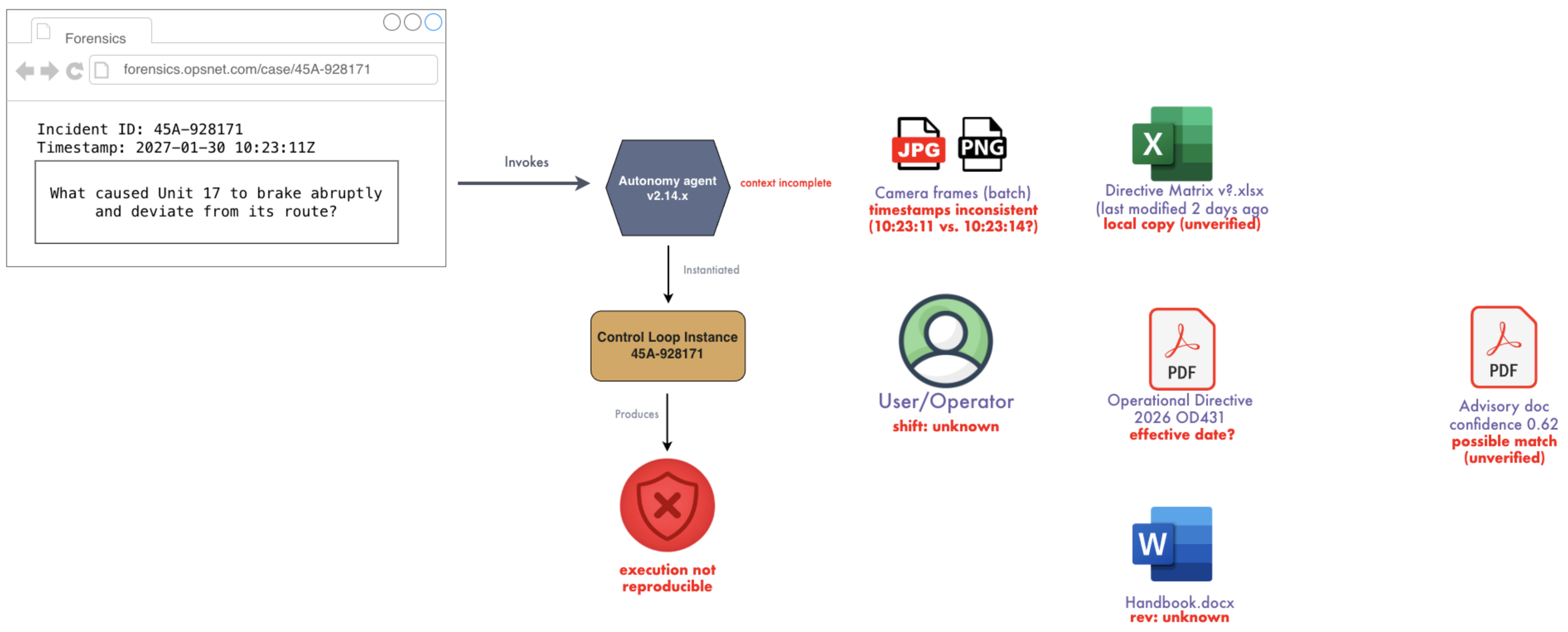

Modern AI systems are increasingly capable of making decisions in the real world. What they are far less capable of doing is explaining those decisions in a way that holds up after something goes wrong. When a vehicle deviates from its path or a robot behaves unexpectedly, the instinct is to reconstruct what happened. After all, we have logs, models, and data. It should be straightforward.

In practice, it is not.

The central issue is that the most important element of all is missing: the exact state the system was operating on at the moment the decision was made.

Physical AI failures are often framed as model failures, but this framing is misleading. In most real-world cases, they are system failures. A decision is rarely the result of a model in isolation. It emerges from a combination of sensor inputs, intermediate transformations, external documents, operational directives, human context, and—critically—timing. The system’s behavior reflects what it “saw” and “believed” at a specific moment in time, not what we can later reconstruct from partial evidence.

Why Reconstruction Fails

The problem is that modern systems process state, but they do not preserve it. State becomes fragmented across edge devices, cloud services, pipelines, and storage layers. By the time an investigation begins, the original system no longer exists in its original form. What remains are traces: logs, metrics, and artifacts. These are useful, but they are not sufficient. They describe events, not the full environment in which those events occurred.

This fragmentation is compounded by the divergence between edge and cloud environments. Edge systems operate on delayed, filtered, and sometimes incomplete data. Cloud systems, by contrast, operate on aggregated and often corrected data. When we attempt to reconstruct a decision from the cloud, we are frequently reconstructing a version of reality that the system itself never experienced.

Logs, while essential, illustrate this limitation clearly. They provide a timeline of events, but not a snapshot of the system’s state at any given moment. They cannot tell us what information was missing, what signals were delayed, or what assumptions were implicitly made. A timeline without a corresponding state is not reconstruction—it is inference.
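To make the gap between a timeline and a state concrete, here is a minimal sketch of a snapshot that records what was missing or delayed at decision time. The names `SignalReading` and `snapshot_gaps`, the signal names, and the 0.5 second freshness threshold are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class SignalReading:
    name: str
    value: Optional[float]        # None: the signal was absent at decision time
    observed_at: Optional[float]  # sample timestamp in seconds, None if absent


def snapshot_gaps(
    readings: List[SignalReading], decision_time: float, max_age_s: float
) -> Tuple[List[str], List[str]]:
    """Return (missing, stale) signal names as of decision_time."""
    missing = [r.name for r in readings if r.value is None]
    stale = [
        r.name
        for r in readings
        if r.value is not None
        and r.observed_at is not None
        and decision_time - r.observed_at > max_age_s
    ]
    return missing, stale


# Illustrative readings; the values are invented for this sketch.
readings = [
    SignalReading("lidar_range_m", 12.4, 99.95),
    SignalReading("wheel_speed_mps", 3.1, 99.2),
    SignalReading("gps_fix", None, None),
]
missing, stale = snapshot_gaps(readings, decision_time=100.0, max_age_s=0.5)
print(missing, stale)
```

A log would typically contain only the two readings that arrived. The snapshot also records that `gps_fix` was absent and that `wheel_speed_mps` was older than the freshness threshold when the decision was made.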

Vector representations introduce a different but equally important limitation. They capture semantic meaning effectively, but they discard the structural and temporal context that gives that meaning significance in a decision. They preserve similarity, but not causality, ordering, or timing. As a result, they are useful for understanding “what something is like,” but not for understanding “what actually happened.”
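A deliberately simplified sketch of this effect: the count vector here is only a stand-in for a pooled embedding (real embedding models are more sophisticated and may retain some positional information), and the event names are hypothetical. Two runs with opposite event ordering collapse to the same vector.

```python
from collections import Counter


def bag_of_events_vector(events, vocabulary):
    """Order-insensitive count vector; a simplified stand-in for a pooled embedding."""
    counts = Counter(events)
    return [counts[name] for name in vocabulary]


vocabulary = ["brake_cmd", "obstacle_detected", "lidar_dropout"]

# Same events, different order: the dropout happens after braking in run_a
# and before the obstacle is detected in run_b.
run_a = ["obstacle_detected", "brake_cmd", "lidar_dropout"]
run_b = ["lidar_dropout", "brake_cmd", "obstacle_detected"]

vec_a = bag_of_events_vector(run_a, vocabulary)
vec_b = bag_of_events_vector(run_b, vocabulary)

same_vector = vec_a == vec_b  # the representations are indistinguishable
same_order = run_a == run_b   # the underlying sequences are not
print(same_vector, same_order)
```

The two runs describe different causal stories, yet nothing in the vectors distinguishes them.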

Explainability tools attempt to fill this gap, but they operate under assumptions that rarely hold in real-world systems. They assume complete inputs, correct signals, and stable context. What they produce is a plausible explanation of what a model would do under idealized conditions—not a faithful reconstruction of what the system experienced at the time.

A Different View

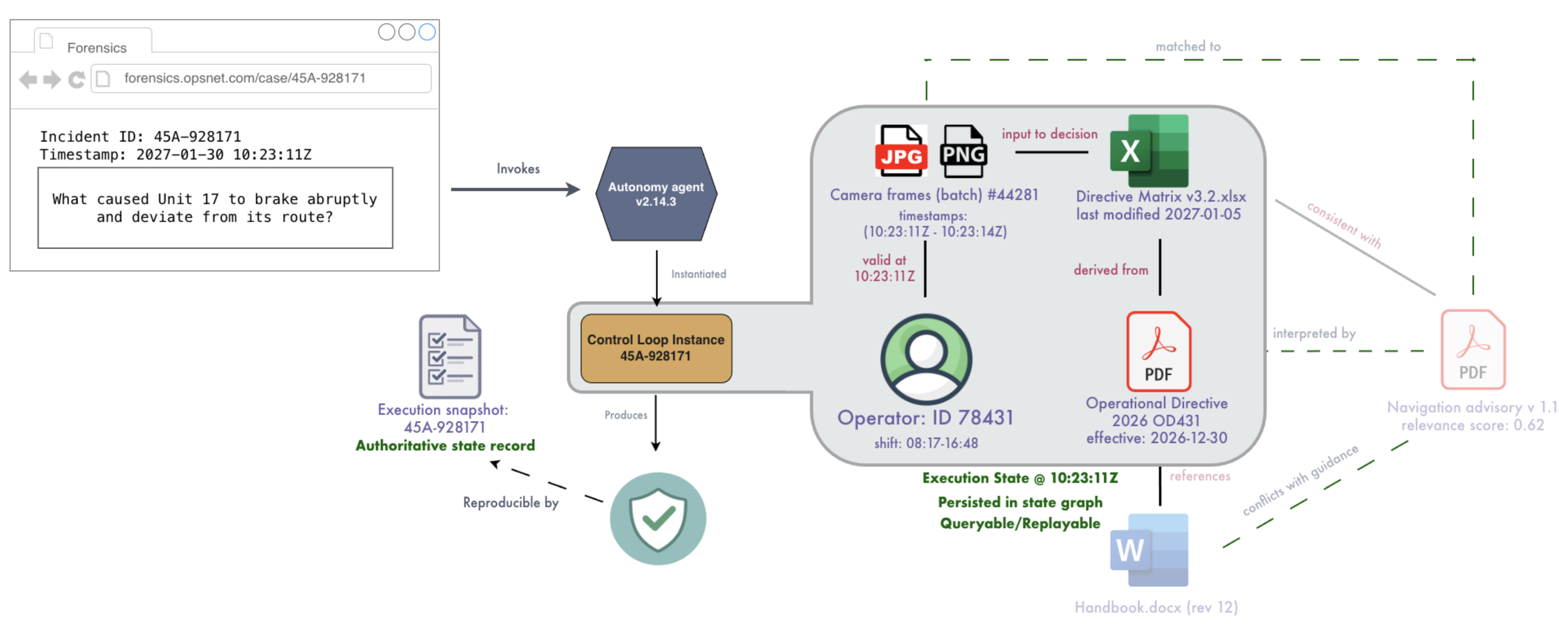

The problem, then, must be reframed. The key question is not why the model made a decision. It is what state the system was operating on when that decision occurred. True auditability requires the ability to reproduce that state, not merely to explain it after the fact.

To achieve this, state must become a first-class concern within the system. It must be structured as connected objects, with relationships explicitly preserved. It must be versioned so that every element is aligned in time. And it must be complete, with no hidden dependencies that only exist implicitly within code or external systems. Most importantly, it must be captured as the system operates, not reconstructed afterward.
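One way to picture these requirements is a small in-memory sketch, assuming a hypothetical `StateSnapshot` that stores versioned objects and the explicit relationships between them. The `dangling_refs` check stands in for the completeness requirement: any relationship that points at an object not held in the snapshot is a hidden dependency.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ObjectRef:
    object_id: str
    version: int


@dataclass
class StateSnapshot:
    captured_at: float
    objects: dict = field(default_factory=dict)  # ObjectRef -> payload
    edges: list = field(default_factory=list)    # (source, relation, target)

    def add(self, ref, payload):
        self.objects[ref] = payload

    def link(self, source, relation, target):
        self.edges.append((source, relation, target))

    def dangling_refs(self):
        """Refs used in a relationship but not stored in this snapshot."""
        refs = set()
        for source, _relation, target in self.edges:
            for ref in (source, target):
                if ref not in self.objects:
                    refs.add(ref)
        return sorted(refs, key=lambda r: (r.object_id, r.version))


# Illustrative objects; identifiers and payloads are invented for this sketch.
frame = ObjectRef("camera_frame", 1)
map_tile = ObjectRef("map_tile", 7)
calibration = ObjectRef("calibration", 3)

snapshot = StateSnapshot(captured_at=100.0)
snapshot.add(frame, {"exposure_ms": 8})
snapshot.add(map_tile, {"region": "depot"})
snapshot.link(frame, "localized_against", map_tile)
snapshot.link(frame, "calibrated_by", calibration)  # calibration never captured

dangling = snapshot.dangling_refs()
print(dangling)
```

Here the snapshot is incomplete: the frame depends on a calibration version that was never captured, and the check surfaces it at capture time rather than during an investigation.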

A Low-Risk Technique

This does not require replacing existing systems of record. In practice, the most effective approach is to introduce a layer that runs alongside the existing infrastructure, ingesting relevant signals, assets, and metadata as they flow through the system. This layer does not compete with operational databases or pipelines; instead, it connects them, preserving the relationships and timing necessary to reconstruct state later. Because it operates in parallel, it can be adopted incrementally, without disrupting the systems already in place.
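As a rough illustration of the parallel-layer idea, the sketch below taps an existing pipeline stage without modifying it. `SidecarRecorder`, its `tap` method, and the `normalize_range` stage are hypothetical names, and a production layer would write to durable storage rather than an in-memory list.

```python
import time
from functools import wraps


class SidecarRecorder:
    """Records inputs, outputs, and timing of tapped stages (in memory only)."""

    def __init__(self, clock=time.time):
        self.events = []
        self._clock = clock

    def tap(self, stage_name):
        def decorator(fn):
            @wraps(fn)
            def wrapper(*args, **kwargs):
                result = fn(*args, **kwargs)
                self.events.append(
                    {
                        "stage": stage_name,
                        "at": self._clock(),
                        "args": args,
                        "kwargs": kwargs,
                        "result": result,
                    }
                )
                return result  # the caller sees exactly what the stage returned

            return wrapper

        return decorator


def normalize_range(raw_mm):
    """Stand-in for an existing pipeline stage; it is not modified."""
    return raw_mm / 1000.0


recorder = SidecarRecorder()
tapped = recorder.tap("normalize_range")(normalize_range)
result = tapped(12400)
print(result, len(recorder.events))
```

The wrapped stage behaves identically from the caller's point of view, which is what allows this kind of layer to be introduced one stage at a time.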

Within such an approach, every decision becomes bound to a fully version-pinned context: the exact model version, the precise inputs, the specific assets involved, and the relationships between them. Nothing is left as “latest,” and nothing is inferred implicitly. This makes it possible to move beyond approximation and toward deterministic replay.
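A minimal sketch of what version pinning could look like, assuming a hypothetical `pin_decision` helper and an illustrative set of floating tags. It refuses any reference that is not pinned and fingerprints the full context with SHA-256 so that later tampering or drift is detectable. The identifiers and digests in the example are placeholders, not real values.

```python
import hashlib
import json

FLOATING_TAGS = {"latest", "current", "stable"}  # hypothetical list of unpinned tags


def pin_decision(decision_id, model_version, input_digests, asset_versions):
    """Build a decision record whose context is fully pinned and hashed."""
    pins = [("model", model_version)] + sorted(asset_versions.items())
    floating = [name for name, version in pins if str(version).lower() in FLOATING_TAGS]
    if floating:
        raise ValueError(f"unpinned references: {floating}")

    record = {
        "decision_id": decision_id,
        "model_version": model_version,
        "input_digests": list(input_digests),  # order of inputs is preserved
        "asset_versions": dict(asset_versions),
    }
    # Canonical JSON so the same context always yields the same digest.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    record["context_digest"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return record


record = pin_decision(
    "d-001",
    "planner-2.3.1",
    ["sha256:ab12", "sha256:cd34"],
    {"hd_map": "tile-7781@v7", "calibration": "v3"},
)
print(record["context_digest"])
```

Calling `pin_decision` with `"latest"` as the model version or as any asset version raises `ValueError`, which makes the "nothing is left as latest" rule enforceable rather than aspirational.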

A decision, in this model, is not a single point in time. It is a traversal across a connected set of data, assets, and transformations. Capturing the decision means capturing that traversal in full. When the traversal is preserved, and any randomness or clock reads in the decision logic are captured along with it, the decision can be replayed exactly as it occurred, using the same state and producing the same outcome.
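The sketch below illustrates replay under simplifying assumptions: the traversal is flattened into a single state dictionary, and `decide` is a hypothetical, purely deterministic braking rule invented for this example. Replaying against the state the edge device actually held reproduces the recorded outcome; replaying against a later, corrected view of the same moment does not.

```python
def decide(state):
    """Hypothetical decision rule: brake if the obstacle is within stopping range."""
    if state["obstacle_distance_m"] is None:
        return "hold"
    stopping_m = state["speed_mps"] ** 2 / (2 * state["max_decel_mps2"])
    if state["obstacle_distance_m"] <= stopping_m + state["margin_m"]:
        return "brake"
    return "continue"


def replay(captured_state, recorded_outcome, decision_fn):
    """Re-run the decision on a copy of the captured state and compare outcomes."""
    replayed = decision_fn(dict(captured_state))
    return {
        "replayed": replayed,
        "recorded": recorded_outcome,
        "match": replayed == recorded_outcome,
    }


# State as the edge device held it at decision time (illustrative values).
edge_state = {
    "speed_mps": 10.0,
    "max_decel_mps2": 5.0,
    "margin_m": 2.0,
    "obstacle_distance_m": 11.0,
}
# The same moment after cloud-side correction of the range estimate.
cloud_state = dict(edge_state, obstacle_distance_m=15.0)

from_edge = replay(edge_state, "brake", decide)
from_cloud = replay(cloud_state, "brake", decide)
print(from_edge["match"], from_cloud["match"])
```

With a speed of 10 m/s and a deceleration of 5 m/s², the stopping distance is 10 m, so the 11 m reading falls inside the 12 m threshold and the 15 m reading does not. Only the preserved edge state explains why the system braked.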

The system that maintains this connected, versioned representation of state effectively becomes the authoritative source for playback. It allows investigators to query not just what happened, but why—grounded in the actual conditions of execution. Because it sits alongside existing systems, it can ingest data from them without requiring those systems to change, while still enabling deep, cross-cutting analysis through modern query and AI techniques.

What This Means

The result is a shift from guesswork to evidence. With full state preservation, decisions can be replayed, failures can be debugged precisely, and the boundary between system errors and model errors becomes clear. Behavior can be demonstrated and verified, rather than inferred.

As physical AI systems become more prevalent, the consequences of failure become more tangible. This raises the bar for accountability, auditability, and reproducibility. Each of these ultimately depends on a single capability: the ability to reconstruct state.

Physical AI rarely fails because models alone are insufficient. More often, its failures go unexplained because state is lost. Reconstructing that state is therefore not an optional enhancement; it is a foundational requirement for building systems that can be trusted in the real world.